Expanding the vision of quantum computing

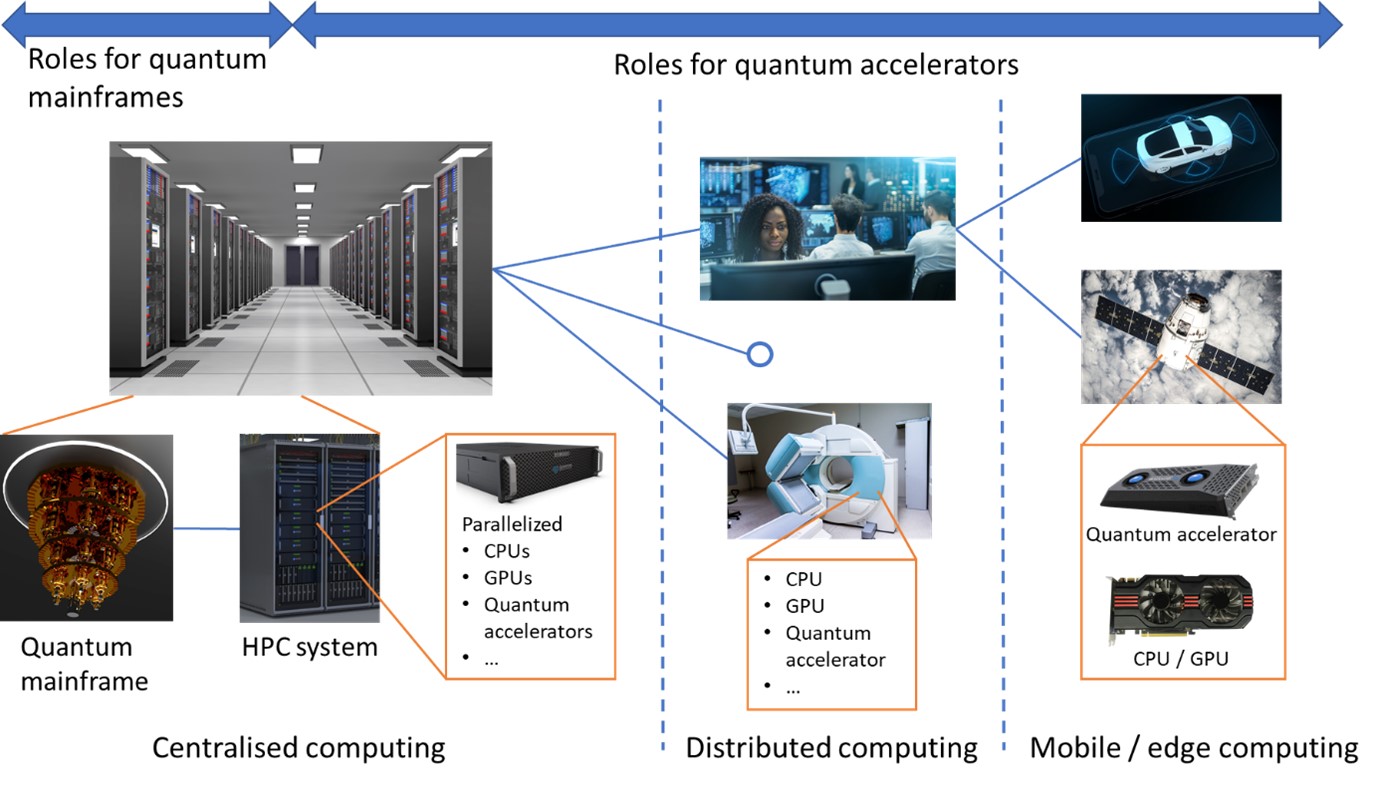

The prevailing picture of a quantum computer is that of a quantum mainframe that competes with classical supercomputers to solve only the most difficult problems. This picture originates from today's quantum hardware technologies, which are constrained to mainframe roles because they are large, fragile machines that require ultra-low temperatures and/or pressures and complex control systems to operate. As with the classical mainframes of the 1960s, the likely future of quantum mainframes is that a few are installed in each supercomputing and cloud computing facility around the world, but that they are otherwise not widely employed.

What changed this picture for classical computing was the advent of the microprocessor, which enabled classical computers to dramatically reduce their size, weight and power and so become widely distributed, connected and parallelized, and eventually mobile. In addition to leaps in microprocessor hardware, the classical computing revolution of the 20th century also demanded leaps in how we thought about employing computers, what we applied them to and the software architectures we created to orchestrate them. In the 21st century, a similar revolution in hardware, software and thinking is required for quantum computers to break out of the mainframe and dramatically broaden their roles and applications.

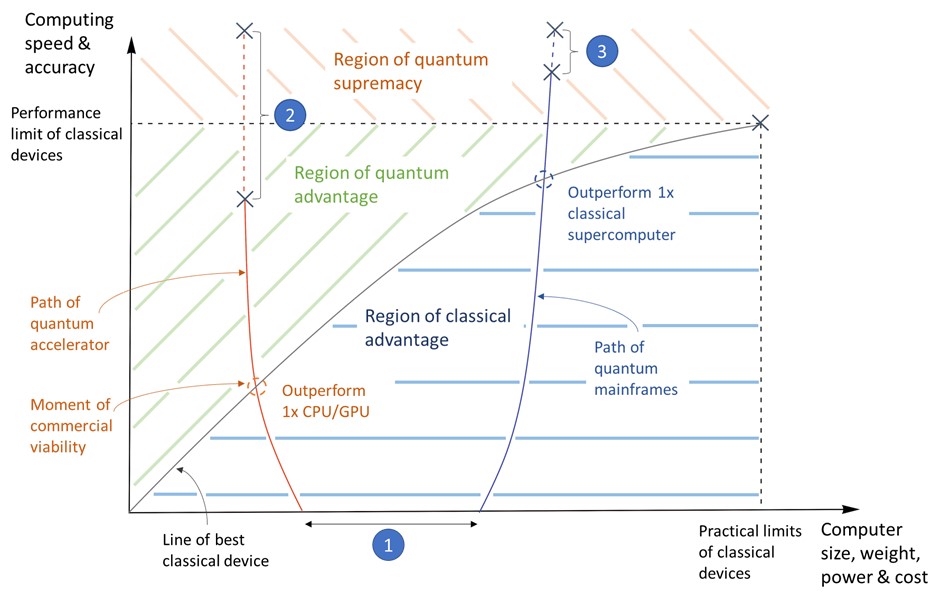

If you switch your picture of a quantum computer from a quantum mainframe to a quantum accelerator card that is small enough to hold in your hands, then your ideas about how quantum computers can be employed, what they can be applied to and when they will be useful change dramatically. Instead of asking “When will this quantum computer outperform a classical supercomputer?”, you will ask “When will this quantum computer outperform the CPU or GPU in my desktop computer for a given task?”. Instead of asking “How do I redesign my supercomputer facility to accommodate a quantum computer?”, you will ask “How do I integrate hundreds of quantum computers into the racks of my current supercomputer?”. You may even begin to ask “What if my satellite, vehicle, manufacturing plant or desktop computer had one or more quantum computers accelerating certain tasks or making some tasks possible for the first time?”.

Quantum Brilliance is developing quantum accelerators

Quantum Brilliance is an Australian-German company whose aim is to deliver quantum accelerators to the world and to support those who aim to discover and develop their applications, build the software architectures to employ them and integrate them into computing systems. In doing so, Quantum Brilliance is seeking to make quantum computers useful sooner, by focusing on outperforming CPUs and GPUs of comparable size, weight and power, and to dramatically expand their scope of applications by making them robust enough for massive-parallelization with classical computers in computing centers and deployment in mobile platforms.

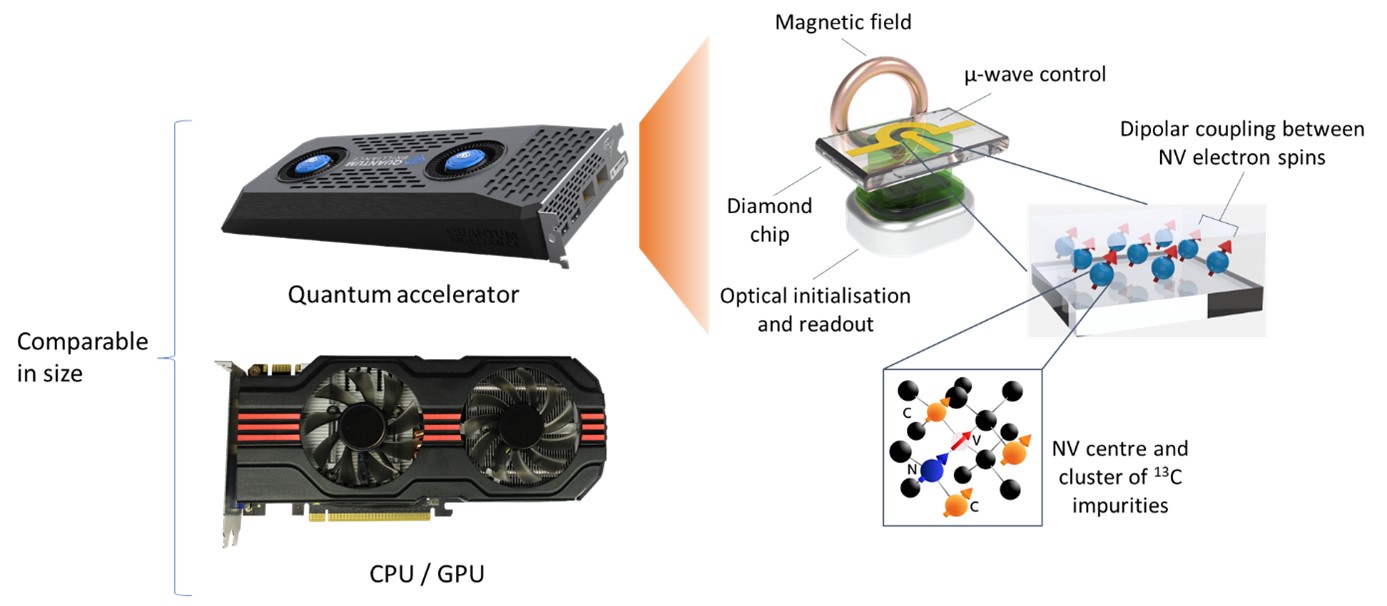

Quantum Brilliance’s accelerators are based on diamond quantum computers, which can operate in ambient conditions with comparatively simple control systems, whilst delivering competitive performance. Room-temperature diamond quantum computing is not new. In its 20+-year history [1], German researchers have pioneered impressive achievements, including demonstrations of quantum algorithms [2], quantum simulations [3][4], quantum error correction [5][6] and high-fidelity operations [5][6][7]. But, in recent years, it has not scaled past a handful of qubits due to challenges with qubit fabrication yield and precision. Quantum Brilliance’s core innovations address this barrier to scaling as well as the miniaturization and integration of control structures that is crucial to realizing chip-scale quantum microprocessors.

In the following, I further introduce diamond quantum computing and the pathway to quantum accelerators being pursued by Quantum Brilliance, discuss the employment and applications of quantum accelerators, and outline the opportunities for researchers and industry to engage with quantum accelerators today.

The road to quantum accelerators

Room-temperature diamond quantum computers consist of an array of processor nodes (see concept diagram in figure 3). Each processor node comprises a nitrogen-vacancy (NV) center (a defect in the diamond lattice consisting of a substitutional nitrogen atom adjacent to a vacancy) and a cluster of nuclear spins: the intrinsic nitrogen nuclear spin and up to ~4 nearby 13C nuclear spin impurities. The nuclear spins act as the qubits of the computer, whilst the NV centers act as quantum buses that mediate the initialization and readout of the qubits, as well as intra- and inter-node multi-qubit operations. Quantum computation is controlled via radiofrequency, microwave, optical and magnetic fields.

Operation at room temperature and pressure is owed to the remarkable properties of the NV center [8], namely its optical electron spin initialization and readout mechanism, which retains high fidelity and contrast under simple off-resonance illumination in ambient conditions, and its long electron spin coherence time (~1 ms), which is the longest of any solid-state electron spin at room temperature. These properties allow the NV center to operate effectively as a quantum bus that can initialize, read out and connect the otherwise weakly interacting and highly coherent nuclear spin qubits.

Thus far, diamond quantum computation using three qubits within a single node or two qubits in two nodes has been performed. Demonstrated initialization and readout fidelities exceed 99.6% [5], whilst single and two qubit gate fidelities exceed 99.99% and 99%, respectively, with corresponding gate times ranging up to ~10 µs [5][6][7]. Recent work shows that gate fidelities exceeding 99.999% and gate times below ~1 µs are possible with more advanced quantum control techniques [9], which will enable diamond to cross thresholds for fault-tolerant operation and achieve large circuit depths.
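To give a feel for how gate fidelity translates into achievable circuit depth, the following is a rough sketch rather than Quantum Brilliance's error model. It assumes that gate errors are independent, so that the success probability of a circuit is approximately the product of its gate fidelities. The function name `max_gates` and the 50% success target are illustrative choices of mine.

```python
import math


def max_gates(fidelity: float, target: float = 0.5) -> int:
    """Largest number of gates n such that fidelity**n >= target.

    Assumes independent, uncorrelated gate errors (a simplification),
    so the circuit success probability is fidelity**n.
    """
    if not (0.0 < fidelity < 1.0) or not (0.0 < target < 1.0):
        raise ValueError("fidelity and target must lie strictly between 0 and 1")
    return math.floor(math.log(target) / math.log(fidelity))


# Fidelities quoted in the text: demonstrated two-qubit (99%),
# demonstrated single-qubit (99.99%) and projected (99.999%).
for f in (0.99, 0.9999, 0.99999):
    print(f"gate fidelity {f}: roughly {max_gates(f)} gates at >= 50% success")
```

Under this simple model, each additional "nine" of fidelity buys roughly a tenfold increase in the number of gates that can be executed before the result is more likely wrong than right, which is why the projected fidelities matter for large circuit depths.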

Key to scaling beyond a handful of qubits and nodes is the precise fabrication of arrays of NV centers that are separated by a few nanometers. This precision is required to magnetically-couple the electron spins of the NV centers so that they may mediate the inter-node multi-qubit operations. However, this precision cannot be achieved with high yield using the existing ‘top-down’ nitrogen ion-implantation techniques for creating NV centers, owing to the limits of implantation mask fabrication and the scattering of implanted ions [10].

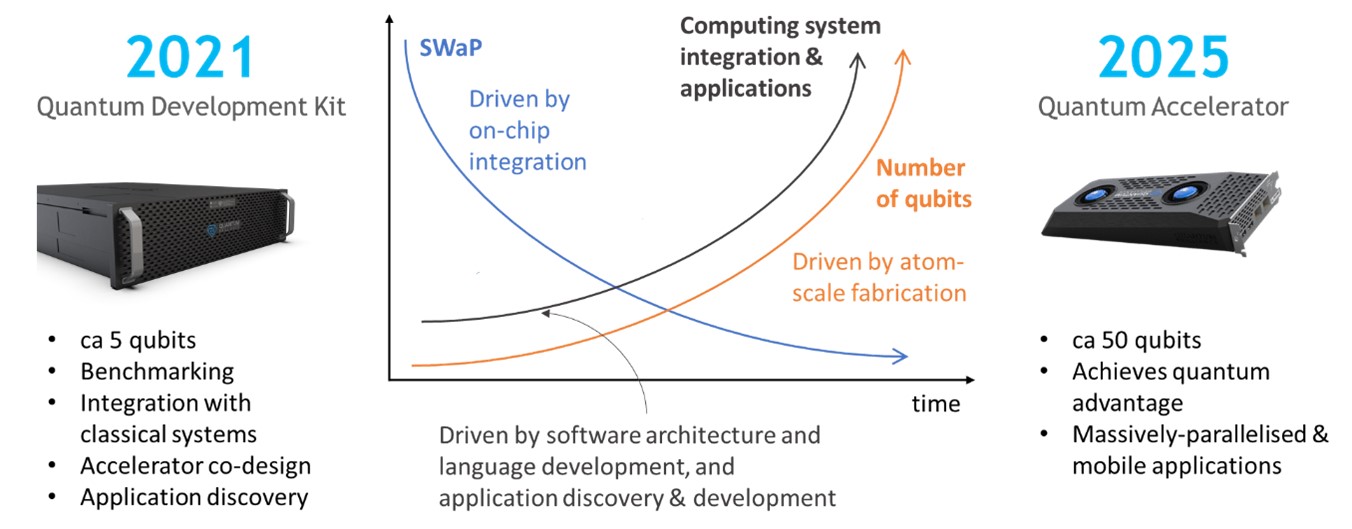

One of Quantum Brilliance’s key inventions is a ‘bottom-up’ atomically-precise fabrication technique for diamond that circumvents these limitations through designer surface chemistry and lithography. The technique draws inspiration from the atom-scale fabrication techniques for silicon that were pioneered in Australia [11]. Another of Quantum Brilliance’s key inventions is the integrated quantum chip that miniaturizes and integrates the electrical, optical and magnetic control systems of diamond quantum computers. The combination of these two inventions enables the simultaneous scaling-up of qubit numbers with scaling-down of the total size, weight and power of diamond quantum computers, and thus the realization of compact and robust quantum accelerators for mobile and parallelized applications.

Quantum Brilliance’s goal is to build quantum accelerators containing >50 qubits that outperform CPUs/GPUs of comparable size, weight and power in important applications within the next 5 years. In 2021, Quantum Brilliance will deliver its first Quantum Development Kits (QDKs), which will contain up to 5 qubits in a 19-inch rack-mountable unit. These QDKs will support benchmarking, hardware and software integration with classical computing systems, accelerator co-design and application discovery activities with Quantum Brilliance’s customers and R&D partners. Over the next five years, the QDKs will be progressively upgraded with more qubits, reduced in size and ruggedized, through the implementation of atomically-precise diamond fabrication and on-chip control system integration.

Alongside its hardware development, Quantum Brilliance is developing software architectures and high-performance emulators to help users develop and test software for the integration and application of quantum accelerators and to evaluate their current and future performance. Quantum Brilliance’s current software architecture is based upon the XACC framework developed specifically for quantum accelerators by Quantum Brilliance’s collaborators at the Oak Ridge National Laboratory [12]. Quantum Brilliance’s quantum emulator is distinguished from other quantum simulators by its detailed model of diamond quantum computers (e.g. qubit topology, native operations, errors and operation times) and scalability on high-performance computing systems. This enables users to experience the behavior and performance of current and future quantum accelerator hardware at close to full scale in qubit number.

The employment and applications of quantum accelerators

Like quantum computing more broadly, the roles and applications of quantum accelerators are early in their discovery and development. Here, I provide some examples of applications that illustrate how massively-parallelized and mobile quantum accelerators can deliver significant advantages and broaden the roles and applications of quantum computers.

Massively-parallelized quantum accelerators promise a leap in the simulation of molecular dynamics (MD). MD simulations are used extensively to model large molecules or large numbers of interacting molecules to study phenomena like the structure, conformations and interactions of biomolecules and molecular diffusion, transport and interactions with solid interfaces [13]. They have applications in drug design, chemical synthesis, energy storage and nanotechnology [13].

Conventional MD techniques treat the atoms of the molecules as point particles and employ effective potentials and forces to solve the classical dynamics of the atoms. This has a variety of critical limitations: (1) by assuming adiabaticity, excited states of the molecules are ignored, even though they are often pivotal in interactions, reactions and energy transfer, (2) the use of restricted sets of empirical or ab initio data for the effective potentials and forces reduces accuracy and generalizability, and (3) bond breaking and formation are ignored, which prevents modeling of chemical reactions [13].

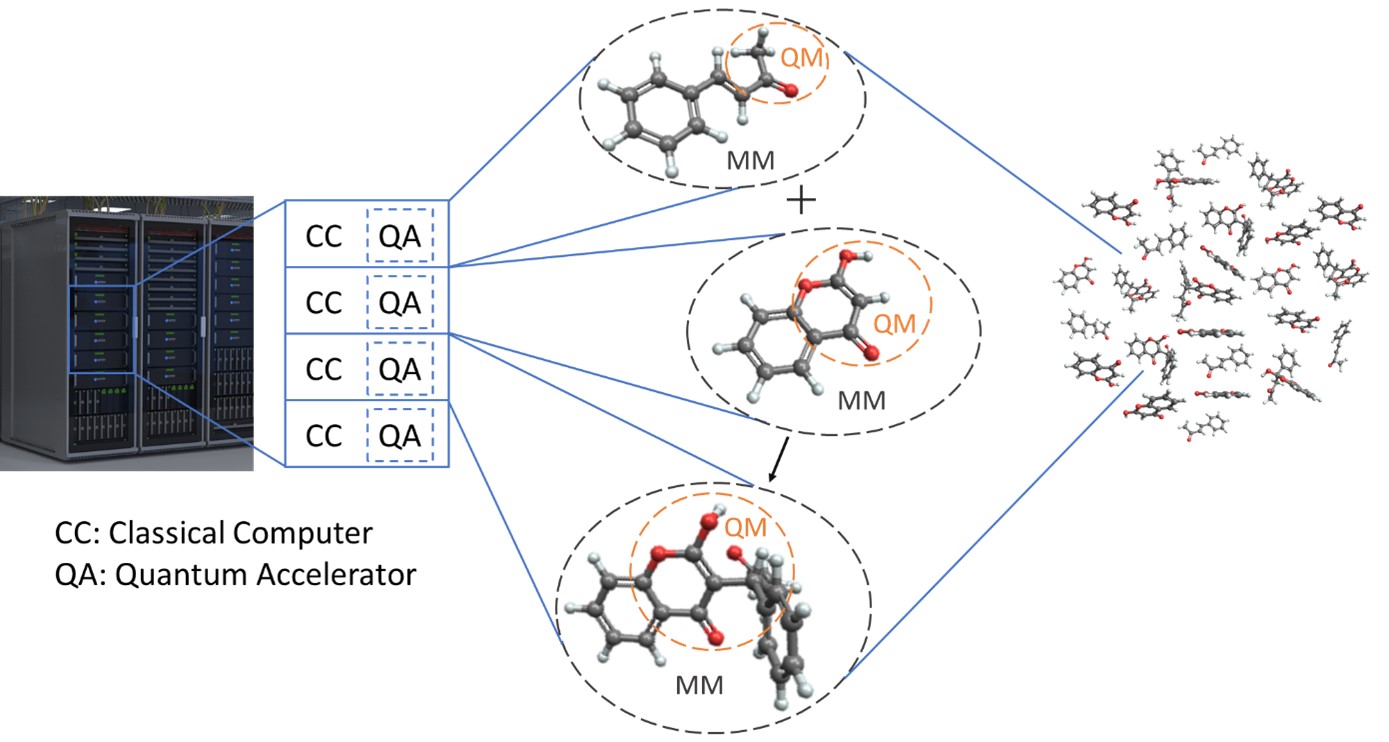

The Quantum Mechanics/Molecular Mechanics (QM/MM) formalism of MD was invented to overcome these limitations of conventional techniques [13]. In QM/MM, molecules are divided into parts that are treated using quantum mechanics (e.g. reaction sites of proteins, reactive groups of molecules) and parts that are treated using conventional molecular mechanics. In this way, the accuracy and generality of QM calculations are gained for the important parts, whilst the speed of MM calculations is retained for the other parts.

The trouble is that the QM calculations are computationally expensive on classical computers, which severely limits the utility of QM/MM approaches today. The obvious solution is to split the QM and MM calculations between quantum accelerators and classical computers, respectively, and exploit the significant efficiency gains of quantum computing methods for quantum chemistry calculations [14]. By parallelizing over many quantum accelerators, rather than just one quantum mainframe, QM/MM simulations of systems of many interacting molecules become possible, enabling a dramatic increase in the size, accuracy and scope of MD simulations. Examples include the study of multiple reactions and conformation changes within a system of biochemicals in drug design, or of the impact of reaction byproducts during chemistry at a solid-liquid interface in catalysis design.
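The division of labour described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a real QM/MM code: `qm_energy` and `mm_energy` are hypothetical placeholders that return dummy values, the thread pool merely stands in for a rack of quantum accelerators, and the energies are simply added, which omits the QM-MM coupling term that a real scheme would include.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Fragment:
    name: str
    n_atoms: int
    quantum: bool  # True for reactive parts that need a QM treatment


def qm_energy(fragment: Fragment) -> float:
    """Hypothetical stand-in for a quantum chemistry job on one accelerator."""
    return -1.0 * fragment.n_atoms  # dummy value, not physics


def mm_energy(fragment: Fragment) -> float:
    """Hypothetical stand-in for a classical force-field evaluation."""
    return -0.5 * fragment.n_atoms  # dummy value, not physics


def qmmm_energy(fragments: list[Fragment], n_accelerators: int = 4) -> float:
    """Evaluate QM parts in parallel and MM parts classically, then sum.

    Purely additive: the QM-MM interaction term is deliberately left out.
    """
    qm_parts = [f for f in fragments if f.quantum]
    mm_parts = [f for f in fragments if not f.quantum]
    # Each worker plays the role of one quantum accelerator.
    with ThreadPoolExecutor(max_workers=n_accelerators) as pool:
        qm_total = sum(pool.map(qm_energy, qm_parts))
    mm_total = sum(mm_energy(f) for f in mm_parts)
    return qm_total + mm_total


system = [
    Fragment("active site", 4, quantum=True),
    Fragment("ligand", 2, quantum=True),
    Fragment("solvent shell", 100, quantum=False),
]
print(qmmm_energy(system))
```

The point of the sketch is the structure: the QM fragments are independent jobs, so adding accelerators shortens the expensive part of each time step, whereas a single mainframe would have to process them one after another.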

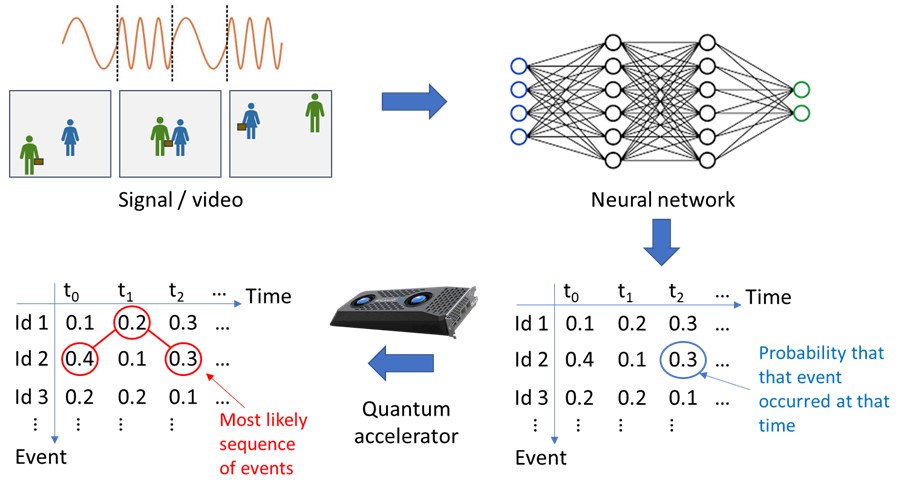

Mobile quantum accelerators promise a leap in signal and image processing in autonomous and intelligent technologies at the network edge. A key ingredient of automated and intelligent technologies is the processing of signals and images to identify features and the most-likely sequence of events. Current AI/ML solutions are inefficient at dealing with the combinatorial explosion of possibilities and correlations between events at different times [15]. In edge computing, where computing resources and time are constrained, they must introduce severe approximations (e.g. ignoring correlations), which introduce inaccuracies [15]. Various high-value applications in areas such as defence and autonomous vehicles cannot accept these inaccuracies and are thus seeking a solution. Examples include speech-to-text or signal-to-text conversion for human-machine teaming, and feature and behavior identification in satellite/field intelligence and surveillance (see figure 5).

A promising solution is the Hybrid ML-Quantum Decoder, which is a combination of a neural network operating on a classical computer and a quantum decoding algorithm operating on a quantum accelerator. The neural network accepts a signal or video and generates a highly correlated probability time series of event/feature identifications. The quantum accelerator then implements the quantum decoder algorithm to efficiently find the most-likely sequence of events [15]. This hybrid approach is distinguished by the benefits of:

(1) a dramatic speed-up that avoids the approximations and inaccuracies made by current classical technologies, since the quantum decoder algorithm is up to 𝑛^5 more efficient than the best classical algorithms (depending on the quality of the neural network’s output) [15].

(2) addressing the memory constraints of quantum computers by using the neural network to filter and compress the large amounts of data in the signal/video into a dense output that can be encoded in and processed by a modest number of qubits.

(3) adaptability, because the neural network can be re-built/-trained for different applications, whilst the quantum decoder algorithm and accelerator configuration remain the same.
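To make the decoding task concrete, here is a small classical reference sketch of "finding the most-likely sequence of events" from a probability time series. It uses the standard Viterbi algorithm and is not the quantum decoder of [15]. The transition matrix, the two-event example and the function names are assumptions introduced purely for illustration.

```python
import math


def _log(p: float) -> float:
    """Logarithm that maps zero probability to -inf instead of raising."""
    return math.log(p) if p > 0 else -math.inf


def most_likely_sequence(frame_probs, transition):
    """Viterbi search over per-frame event probabilities.

    frame_probs[t][e] : network's probability of event e at time step t
    transition[a][b]  : assumed probability that event b follows event a
    Returns the highest-scoring event sequence as a list of event indices.
    """
    n_events = len(frame_probs[0])
    # Work in log space to avoid underflow on long sequences.
    score = [_log(p) for p in frame_probs[0]]
    back = []
    for probs in frame_probs[1:]:
        new_score, pointers = [], []
        for b in range(n_events):
            # Best predecessor for event b at this time step.
            best_a = max(range(n_events),
                         key=lambda a: score[a] + _log(transition[a][b]))
            new_score.append(score[best_a] + _log(transition[best_a][b]) + _log(probs[b]))
            pointers.append(best_a)
        score = new_score
        back.append(pointers)
    # Trace the best path backwards from the best final event.
    path = [max(range(n_events), key=lambda e: score[e])]
    for pointers in reversed(back):
        path.append(pointers[path[-1]])
    return path[::-1]


# Hypothetical network output for two events over three time steps.
frame_probs = [[0.6, 0.4], [0.45, 0.55], [0.6, 0.4]]
# Assumed correlation between time steps: events tend to persist.
transition = [[0.9, 0.1], [0.1, 0.9]]

greedy = [max(range(2), key=lambda e: p[e]) for p in frame_probs]
print("ignoring correlations:", greedy)
print("with correlations:   ", most_likely_sequence(frame_probs, transition))
```

In this toy case, picking the most probable event at each time step independently gives a different answer from the search that accounts for correlations between time steps, which is the kind of inaccuracy described above. The classical search scales with the number of time steps times the square of the number of events, and it is this search step that the hybrid approach hands to the quantum accelerator.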

Opportunities for industry and research

At this early stage of quantum accelerators, there are numerous opportunities for researchers and companies to undertake novel, high-impact science and to develop high-value products and applications. Opportunities include:

- Discovery and development of applications of quantum accelerators.

- Design and development of programming languages and software to efficiently employ, manage and optimize integrated clusters of classical computers and quantum accelerators.

- Design and development of software to optimize the compiling of programs for and performance of quantum accelerators.

- Co-design and -development of quantum accelerator hardware for targeted applications.

Importantly, these opportunities lie in fields that may previously have discounted quantum computing as inapplicable, impractical or inaccessible because of the prevailing picture of cloud access to a few mainframe quantum supercomputers. By enabling massively-parallelized and mobile quantum computing, quantum accelerators have the potential to bring the advantages of quantum computing to new scientific disciplines, industries and defence sectors, and to reopen them to those that had set quantum computing aside.

If you wish to learn more about how your science or industry can benefit from using quantum accelerators, or if you wish to learn more about how you can engage in R&D of quantum accelerators and their applications, then I encourage you to contact Quantum Brilliance.

Acknowledgements

Figures prepared by Dr Johannes Kostka, Quantum Brilliance.

References

[1] J. Wrachtrup, S.Ya Kilin and A.P. Nizovtsev, Optics and Spectroscopy 91, 459 (2001).

[2] K. Xu et al., Physical Review Letters 118, 130504 (2017).

[3] Y. Wang et al., ACS Nano 9, 7769 (2015).

[4] F. Kong et al., Physical Review Letters 117, 060503 (2016).

[5] G. Waldherr et al., Nature 506, 204 (2014).

[6] T. Taminiau et al., Nature Nanotechnology 9, 171 (2014).

[7] X. Rong et al., Nature Communications 6, 8748 (2015).

[8] M.W. Doherty et al., Physics Reports 528, 1 (2013).

[9] Y. Chen, S. Stearn, S. Vella, A. Horsley and M.W. Doherty, New Journal of Physics 22, 093068 (2020).

[10] See for example: I. Bayn et al., Nano Letters 15, 1751 (2015).

[11] M. Fuechsle et al., Nature Nanotechnology 7, 242 (2012).

[12] A.J. McCaskey, D.I. Lyakh, E.F. Dumitrescu, S.S. Powers and T.S. Humble, Quantum Science and Technology 5, 024002 (2020).

[13] P. Atkins and R. Friedman, Molecular Quantum Mechanics (Oxford University Press: Oxford, 2005); C.J. Cramer, Essentials of Computational Chemistry: Theories and Models (Wiley: West Sussex, 2004).

[14] See for example: C. Hempel et al., Physical Review X 8, 031022 (2018).

[15] J. Bausch, S. Subramanian and S. Piddock, arXiv:1909.05023 (2020).
